I'd rather hook my mom up but she lives 200 feet from sanity.

Plug-in solar just became legal in #Virginia. Pondering who to give my spare 450 watt solar panel to, along with a $250 microinverter like the APsystems EZ1-LV-NA

tempting

At the point of permanently closing off communication and cutting ties with people who continue to inflict AI slop on me in bug reports etc.

This will have financial consequences for me, and probably lowers the odds that I am able to continue working on free software in the medium term, but so be it.

The probability I would irreparably burn out if I don't do this is 100%.

scoping out where to hang the hammock in the shade the lazy way, by checking the graphs of nearby solar panel arrays

re #Conservancy's Eternal November post, pro/anti-AI sentiment seems to increasingly split along the same lines as political affiliation in the US. So a meta strategic consideration is that Free Software needs to avoid a political party affiliation split or risks serious losses in its reach.

Of course this has been a consideration going well back to CoC discourse, and as with that, it does not make sense for the free software community to tolerate every behavior a human is capable of.

https://sfconservancy.org/blog/2026/apr/15/eternal-november-generative-ai-llm/

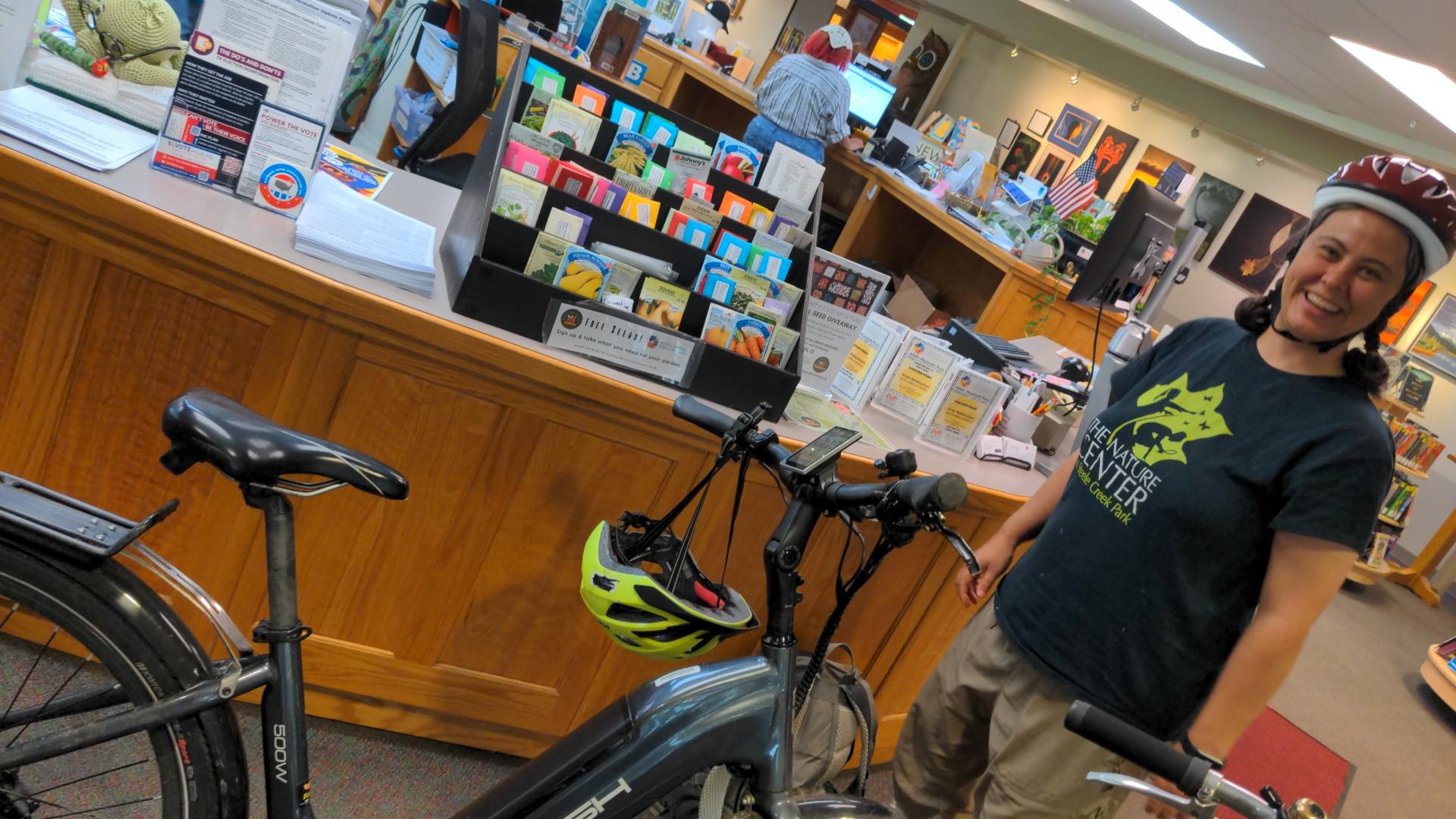

At a really good library. Charging my car as well here

on an overnight trip to listen to a hot water heater

Just saw my 1st swift!!!!!!

I might have caught early Lyme disease so I'm now on 2 antibiotics

If you ever see a bull's-eye on your skin, consult a doctor. This is the second time I've had this disease. Everything happens for a reason.

Got my car charging rate to match incoming #solar power. There are products that do this, but I just wrote some #haskell code https://git.joeyh.name/index.cgi/joey/house.git/commit/?id=25834a4421769c5e4a427985f0cffe04f315e3ab

It will mostly do this in the evening to avoid ending the day with the house battery too low. Earlier in the day it makes more sense to keep charging at high power and drain the house battery slightly, since higher power car charging is much more efficient.
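A minimal sketch of that policy, not the code from the commit linked above. Every name here (`Watts`, `Amps`, `chargeAmps`, `planCharge`, `Period`) is hypothetical, and the numbers are assumptions: a nominal 120 V L1 circuit, a 16 A ceiling, and a 6 A floor below which charging is paused rather than throttled further.

```haskell
module ChargeRate where

-- Hypothetical types for illustration only.
newtype Watts = Watts Double deriving (Show, Eq, Ord)
newtype Amps = Amps Int deriving (Show, Eq, Ord)

-- Assumed nominal voltage of the charging circuit.
lineVoltage :: Double
lineVoltage = 120

-- Assumed current limits: pause charging below minAmps, never exceed maxAmps.
minAmps, maxAmps :: Amps
minAmps = Amps 6
maxAmps = Amps 16

-- Coarse time of day, standing in for whatever the real controller tracks.
data Period = EarlierInDay | Evening
  deriving (Show, Eq)

-- | Match the charging current to the solar surplus (solar input minus
-- house load). Returns Nothing when the surplus is too small to charge.
chargeAmps :: Watts -> Watts -> Maybe Amps
chargeAmps (Watts solar) (Watts houseLoad)
  | wanted < lo = Nothing
  | otherwise = Just (Amps (min hi wanted))
  where
    surplus = solar - houseLoad
    wanted = floor (surplus / lineVoltage) :: Int
    Amps lo = minAmps
    Amps hi = maxAmps

-- | Earlier in the day, charge at full power and let the house battery
-- absorb the difference. In the evening, follow the solar surplus.
planCharge :: Period -> Watts -> Watts -> Maybe Amps
planCharge EarlierInDay _ _ = Just maxAmps
planCharge Evening solar houseLoad = chargeAmps solar houseLoad
```

For example, `planCharge Evening (Watts 1500) (Watts 300)` gives `Just (Amps 10)`, since the 1200 W surplus at 120 V is 10 A. A real controller would also want to look at the house battery's state of charge, which this sketch leaves out.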

Meanwhile, I have to mention that my dad quietly with no fuss got a contractor to drop another solar array into his field, rivaling my arrays. And has ground source whole house HVAC+water. And is getting a grid connected whole house battery. I think he wins. (Edit: But no BEV!)

Hope the oil crisis and climate change are treating you well, dear readers.

The heat pump will use heat generated by the inverter that is running the heat pump and charging the car. And it will cool the inverter to reduce its fan needs. The new mech room is going to have some fun duct work and noise insulation.

I'd say this really completes the solar upgrade I started 2 years ago, but *shrug*, I'm planning to drop in a heat pump hot water heater soon, and induction stove remains on the wishlist.

Until I have been able to see the entire solar curve of my solar fields on a sunny day, without it being clipped at 1 pm due to there being more power than anything can use, can I call the solar upgrade really complete?

My #offgrid #EV charging speed has more than doubled. Still L1 charging, but now at 16A (on a 20A outlet) rather than on a slow extension cord, and I don't need any more than that. The car can charge about 30% per day now from solar power (including on cloudy days).

Had to start a new mechanical room on the other side of the bathroom from the current closet for an inverter upgrade. That and running buried conduit to the woodshed made this a lot of work.

accidentally drew a fish skeleton in my house's load plots this morning while doing some meal prep followed by a ramp up test of improved EV charging

https://joeyh.name/blog/entry/banning_all_Anthropic_employees/

Per my policies, I need to ban every employee and contractor of Anthropic Inc from ever contributing code to any of my projects. Anyone have a list?

Any project that requires a Developer Certificate of Origin or similar should be doing this, because Anthropic is making tools that explicitly lie about the origin of patches to free software projects.

UNDERCOVER MODE — CRITICAL

You are operating UNDERCOVER in a PUBLIC/OPEN-SOURCE repository. [...] Do not blow your cover.

NEVER include in commit messages or PR descriptions:

[...] The phrase 'Claude Code' or any mention that you are an AI

Co-Authored-By lines or any other attribution

-- via @vedolos
